7 Big Winners in the U.S. Big Data Drive

As you might have already heard, the United States government last week put forth a broad effort called the Big Data Initiative, which seeks to expand the arsenal of tools for grappling with massive data and to fund projects that are exploiting data in meaningful ways.

The focus of this inter-agency collaboration is, as the White House stated, on improving “the tools and techniques needed to access, organize and glean discoveries from huge volumes of data.” OSTP officials narrowed the policy action down to three bullets that define the inter-agency approach. These include:

- Advance state-of-the-art core technologies needed to collect, store, preserve, manage, analyze and share huge quantities of data.

- Harness these technologies to accelerate the pace of discovery in science and engineering, strengthen our national security and transform teaching and learning.

- Expand the workforce needed to develop and use big data technologies.

While you can read more about the specific policies that underlie the $200 million big data call to action here, we wanted to highlight a few select projects that will remain on our radar as they unfold over the next few years of funding.

In reality, there were far too many projects to name in a short set of vignettes, but the following seven represent some of the more compelling and mission-critical among the funded concepts.

Let’s begin…..

#1 DARPA XDATA Program

The Defense Advanced Research Projects Agency (DARPA) is just getting rolling with its XDATA program, which the agency says will be funded to the tune of $25 million annually for four years.

The XDATA program was announced on March 29, 2012, by the White House Office of Science and Technology Policy and the National Coordination Office for Networking and Information Technology Research and Development as part of the “Big Data” initiative.

DARPA’s XDATA program supports a whole-of-government effort to coordinate management of big data technology and better use the volumes of data collected by federal agencies.

The purpose of the XDATA program is to develop computational techniques and software tools for analyzing large volumes of structured and unstructured data (tabular, relational, textual, etc.). The agency states a number of goals as central to the project, including:

Developing scalable algorithms for processing imperfect data in distributed data stores and creating human-computer interaction tools for more accurate, instant “visual reasoning” for mission-critical operations.

According to a DARPA statement, a program like XDATA is critical in the face of battle and mission-critical operations. As the agency writes:

“Current DoD systems and processes for handling and analyzing information cannot be efficiently or effectively scaled to meet this challenge. The volume and characteristics of the data, and the range of applications for data analysis, require a fundamentally new approach to data science, analysis and incorporation into mission planning on timelines consistent with operational tempo.”

Additionally, the project will support “open source software toolkits to enable flexible software development for users to process large volumes of data in timelines commensurate with mission workflows of targeted defense applications.”

#2 The SDAV Institute

While it may not translate into the most graceful-sounding acronym, the DOE’s Scalable Data Management, Analysis and Visualization Institute at Berkeley Lab is set to bring the art and science of big data into new focus.

The program is part of a $25 million five-year initiative to help scientists better extract insights from today’s increasingly massive research datasets. SDAV will be funded through DOE’s Scientific Discovery through Advanced Computing (SciDAC) program and led by Arie Shoshani of Lawrence Berkeley National Laboratory (Berkeley Lab).

According to a statement, “SDAV aims to deliver end-to-end solutions, from managing large datasets as they are being generated to creating new algorithms for analyzing the data on emerging architectures. Finally, new approaches to visualizing the scientific results will be developed and deployed based on other DOE visualization applications such as ParaView and VisIt.”

SDAV, which builds on progress made by other SciDAC projects, is a collaboration tapping the expertise of researchers at Argonne, Lawrence Berkeley, Lawrence Livermore, Los Alamos, Oak Ridge and Sandia national laboratories and at seven universities: Georgia Tech, North Carolina State, Northwestern, Ohio State, Rutgers, the University of California at Davis and the University of Utah. Kitware, a company that develops and supports specialized visualization software, is also a partner in the project.

On a side note, the institution also reeled in other funding worth noting as part of the Big Data Initiative, including funds through the National Science Foundation’s “Expeditions in Computing” program. The five-year NSF Expedition award to UC Berkeley will fund the campus’s new Algorithms, Machines and People (AMP) Expedition. AMP Expedition scientists expect to develop powerful new tools to help extract key information from big data.

#3 An ARM for Climate Research

The Department of Energy-backed Biological and Environmental Research Program (BER) has created the Atmospheric Radiation Measurement (ARM) Climate Research Facility. The facility will serve as a multi-platform scientific hub for collecting and globally operating on large climate data sets.

The primary ARM objective is improved scientific understanding of the fundamental physics related to interactions between clouds, aerosols, and radiative feedback processes in the atmosphere; in addition, ARM has enormous potential to advance scientific knowledge in a wide range of interdisciplinary Earth sciences.

According to the organization, the facility will offer the “international research community infrastructure for obtaining precise observations of key atmospheric phenomena needed for the advancement of atmospheric process understanding and climate models.”

The team behind the project notes that the data itself is massive in size and hugely important for current research efforts, serving as the resource for over 100 peer-reviewed papers per year. They say that collecting and making use of data with high temporal resolution and spectral information requires substantial resources, in terms of both funding and development.

With many collection sites that intake diverse data types, the center represents some of the most sophisticated sets of problems in big data—dealing with variable data sets in a distributed fashion. The funding will help the center stay on top of the newest sensor and data handling technologies.

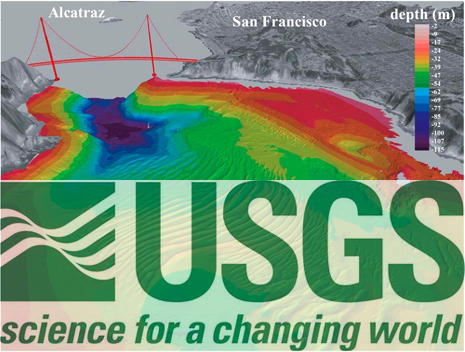

#4 Big Data for Earth System Science

When it comes to big data, there are few research areas finding themselves swimming in complex information quite like the geological sciences.

From climate change to earthquake response paradigms to real-time response capabilities for natural disasters, there is tremendous need for new investments in technologies that fall under the purview of the USGS.

The US Geological Survey has doled out grants to fund big geological science projects via its John Wesley Powell Center for Analysis and Synthesis. According to the center, it hopes to spur new ways of thinking about Earth system science by providing scientists with “a place and time for in-depth analysis, state of the art computing capabilities, and collaborative tools invaluable for making sense of huge data sets.”

Some examples of accepted projects using “big data” are:

- Understanding and Managing for Resilience in the Face of Global Change – This project will use Great Lakes deep-water fisheries and invertebrate annual survey data since 1927 to look for regime shifts in ecological systems. The researchers will also use extensive data collected from the Great Barrier Reef.

- Mercury Cycling, Bioaccumulation, and Risk Across Western North America – This project relies on extensive remote sensing data of land cover types to model the probable locations of mercury methylation for the western U.S., Canada and Mexico. Through a compilation of decades of data records on mercury, the scientists will conduct a tri-national synthesis of mercury cycling and bioaccumulation throughout western North America to quantify the influence of land use, habitat, and climatological factors on mercury risk.

- Global Probabilistic Modeling of Earthquake Recurrence Rates and Maximum Magnitudes – This project will use the Global Earthquake Model (GEM) databases, including the global instrumental earthquake catalog, global active faults and seismic source database, global earthquake consequences database, and new vulnerability estimation and data capture tools. The research goal is to improve models of seismic threats to humanity, and base U.S. hazard estimates on a more robust global dataset and analysis.

#5 Department of Homeland Security & CVADA

The Department of Homeland Security and a collaboration between Rutgers and Purdue University researchers called the Center for Excellence on Visualization and Data Analytics (CVADA) will use their funding to advance response methodologies in the wake of natural disasters or other calamities.

This research effort focuses on the large amounts of data that first responders might be able to harness as they analyze the appropriate response to emergencies, whether man-made or natural.

The Department of Homeland Security Science & Technology (S&T) Directorate Centers of Excellence (COE) network is an extended consortium of hundreds of universities generating ground-breaking ideas for new technologies and critical knowledge, while also relying on each other’s capabilities to serve the Department’s many mission needs.

All Centers of Excellence work closely with academia, industry, Department components and first responders to develop customer-driven research solutions to ‘on the ground’ challenges as well as provide essential training to the next generation of homeland security experts. The research portfolio is a mix of basic and applied research addressing both short- and long-term needs. The COE extended network is also available for rapid response efforts.

#6 Big Data for the CDC

The Centers for Disease Control, which functions under the Department of Health and Human Services, is set to receive funding for two projects that will make actionable intelligence out of the massive amounts of health and wellness data it has collected over the years.

The first project, called BioSense 2.0, is, as the center describes it, “the first system to take into account the feasibility of regional and national coordination for public health situation awareness through an interoperable network of systems, built on existing state and local capabilities.”

The CDC says that BioSense will reduce the costs of having one “monolithic physical architecture” while still benefitting from the distributed nature of the system. This will lead to more streamlined hardware, but will necessitate some appropriate analytical and general framework changes to accommodate the growing data and new infrastructure.

A separate project that is finding funding under the government’s Big Data Initiative is the CDC’s Special Bacterial Reference Laboratory. The aim of the lab is to identify and classify unknown bacterial pathogens for rapid outbreak detection.

The key to this is “networked phylogenomics for bacteria and outbreak ID.” As the CDC describes, “phylogenomics will bring the concept of sequence-based ID to an entirely new level in the near future with profound implications on public health.” They say the development of a pipeline for new species ID will allow for multiple analyses on a new or rapidly emerging pathogen to be performed in hours, rather than days or weeks.

#7 The Machine Reading Program

Also a DARPA effort, this program seeks to replace expert human data handlers with smart machines that can “read” and process natural language and insert it into AI databases.

According to DARPA, if it’s successful, the program could yield language-understanding technology that will automatically process text in near real-time for mission-critical operations.

As DARPA describes it, the program is “developing learning systems that process natural text and insert the resulting semantic representation into a knowledge base rather than relying on expensive and time-consuming current processes for knowledge representation that require experts and associated knowledge engineers to hand-craft information.”

The Machine Reading program is in its final phase and is expected to conclude at the end of FY 2012. The program developed and evaluated numerous innovative prototypes and has laid the technical foundation for future research and development in operational-scale language-understanding capabilities.
