Raising a Platform to Meet New Verticals

Despite wide technological and industry chasms, there is a growing sense of universality when it comes to the general needs big data technologies are fulfilling. While companies in this ever-growing space tend to have their eyes on a key set of verticals, it’s often not a stretch to extend the usefulness of their offerings outside of their core markets.

Metamarkets is a good example of this universality. The company claims it can leverage the three keywords of today’s big data craze (Hadoop, cloud and in-memory) to power the events-based needs of big media, social and gaming companies at web scale.

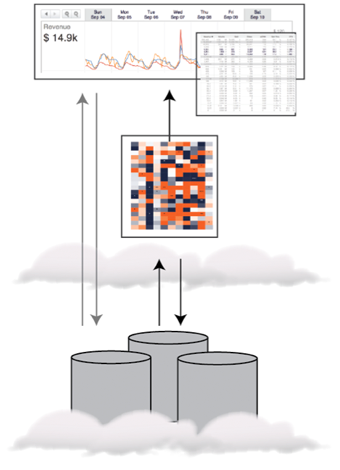

Metamarkets brings a purpose-built analytics engine to the table, delivered as a cloud-based service that runs on Amazon’s platform, including Elastic MapReduce and S3. According to the company’s VP of marketing, Ken Chestnut, making use of the cloud enables the company to direct resources into its core technology (Druid comes to mind) instead of wasting cycles “reinventing the wheel” when it comes to cloud storage and computing infrastructure.

It could be a new day for once highly vertical-specific companies like Metamarkets, whose purpose-built big data products and platforms could find new appeal in verticals that might once have been completely out of reach. Chestnut told us this is due, in part, to a convergence of trends and needs that platforms like theirs, originally built to handle big web data at massive scale, were (unwittingly) made to serve.

While Chestnut wouldn’t name potential new market opportunities specifically, other than noting that the company is still evaluating the full needs of other industries, he pointed to three threads that tie its core industries to the outside world of enterprise big data needs:

- Ever-increasing volumes and sources of event-based data

- Current difficulties transforming that data into actionable insight using existing solutions

- Realization that tremendous competitive edge can be gained by shortening time to insight

Chestnut says these challenges have become more acute for several reasons, the most important of which is that with more events and transactions moving online, there is greater opportunity to measure and quantify results in ways that are non-intrusive to end users. Accordingly, he says, companies have started “instrumenting” all aspects of their operations.

He continued, noting that traditional systems were not designed to handle the volume, velocity, and variety of data that these companies are capturing. “The consequence is that data is being generated faster than it can be processed and consumed resulting in a longer time to insight (the lag time between when data is captured and when it is available for analysis).”

Chestnut noted that customers in the company’s core verticals, which include big-time web publishing, social media and gaming (all major event-based, data-dependent verticals), wanted the ability to “slice and dice,” roll up, and drill down on events-based data (click streams, ad impressions, user actions, etc.) by time, region, gender, and other considerations.
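For readers less familiar with OLAP terminology, the operations Chestnut describes can be illustrated with a small sketch. This is not Metamarkets code; the event records, the field names (`hour`, `region`, `gender`, `type`, `count`) and the `roll_up` helper are all hypothetical, chosen only to show what rolling up and drilling down on event data means.

```python
from collections import defaultdict

# Hypothetical event records; every field name and value is illustrative only.
EVENTS = [
    {"hour": "10", "region": "US", "gender": "F", "type": "click", "count": 3},
    {"hour": "10", "region": "US", "gender": "M", "type": "impression", "count": 5},
    {"hour": "11", "region": "EU", "gender": "F", "type": "click", "count": 2},
    {"hour": "11", "region": "US", "gender": "F", "type": "click", "count": 4},
]


def roll_up(events, dimensions, where=None):
    """Sum event counts grouped by `dimensions`, after an optional equality filter.

    `where` maps a field name to the value it must equal (the "slice").
    Fewer dimensions gives a coarser roll-up; more dimensions is a drill-down.
    """
    where = where or {}
    totals = defaultdict(int)
    for event in events:
        if all(event[field] == value for field, value in where.items()):
            key = tuple(event[d] for d in dimensions)
            totals[key] += event["count"]
    return dict(totals)


# Roll up all events by region.
by_region = roll_up(EVENTS, ["region"])

# Slice to clicks only, then drill down by region and gender.
clicks_by_region_gender = roll_up(EVENTS, ["region", "gender"], where={"type": "click"})
```

Here `by_region` holds one total per region, while `clicks_by_region_gender` restricts the data to clicks and breaks the totals down further. The same pattern extends to time buckets or any other dimension present in the records.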

He said that at first, they investigated a number of relational- and NoSQL-based alternatives, but none of them achieved the speed and scale required. As a result, the company developed their own distributed, in-memory, OLAP data store, Druid.

As Chestnut told us, “To overcome performance issues typically associated with scanning tables, Druid stores data in memory. The traditional limitation with this approach, however, is that memory is limited. Therefore, we distribute data over multiple machines and parallelize queries to speed processing and handle increasing data volumes. Our customers are able to scan, filter, and aggregate billions of rows of data at ‘human time’ with the ability to trade-off performance vs cost.”
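The general idea in that quote (keep the data in memory, spread it over several machines, and run each query on all of them in parallel) can be sketched in a few lines. The following is a toy illustration of the pattern under stated assumptions, not Druid's actual design or API: threads stand in for machines, Python lists stand in for in-memory shards, and the names `partition`, `scan_shard` and `parallel_query` are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

# Hypothetical in-memory rows; field names are illustrative only.
ROWS = [
    {"region": "US", "type": "click", "count": 3},
    {"region": "EU", "type": "click", "count": 2},
    {"region": "US", "type": "impression", "count": 5},
    {"region": "US", "type": "click", "count": 4},
    {"region": "EU", "type": "impression", "count": 1},
]


def partition(rows, n_nodes):
    """Split rows round-robin into n_nodes shards (stand-ins for machines)."""
    return [rows[i::n_nodes] for i in range(n_nodes)]


def scan_shard(shard, predicate, key):
    """Scan one shard, keep rows matching `predicate`, sum counts per `key`."""
    partial = Counter()
    for row in shard:
        if predicate(row):
            partial[row[key]] += row["count"]
    return partial


def parallel_query(shards, predicate, key):
    """Run the same scan on every shard concurrently, then merge the partials."""
    total = Counter()
    with ThreadPoolExecutor(max_workers=len(shards)) as pool:
        for partial in pool.map(lambda s: scan_shard(s, predicate, key), shards):
            total.update(partial)  # Counter.update adds counts together
    return dict(total)


shards = partition(ROWS, 3)
clicks_by_region = parallel_query(shards, lambda r: r["type"] == "click", "region")
```

Because each shard produces an independent partial aggregate that is merged at the end, adding shards lets the same query cover more data without lengthening any single scan, which is one way to read the performance-versus-cost trade-off Chestnut mentions.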

On that note, the concept of handling requests in “human time” is important to the company and plays into its strategy around Hadoop. Chestnut says that Hadoop is very complementary to Metamarkets (and vice versa). “While Hadoop has tremendous advantages processing data at scale, it does not respond to ad-hoc queries in human time. This is where Metamarkets shines. We use Hadoop to pre-process data and prepare it for fast queries in Druid. When users log into Metamarkets, they can explore data in real-time without limits in terms of navigation or speed.”
